Building Political Superintelligence

Amidst fears of dystopia, a blueprint for how we use AI to reinvent the way we govern ourselves

“We have it in our power to begin the world over again.”

–Thomas Paine, Common Sense, 1776

Right now is a weird time to be a political economist. AI is straining our already brittle political institutions. We might lurch into a dystopia in which we live in the grips of a techno-leviathan, forced by our employers to train our own AI replacements, then kicked to the curb in a society organized to the benefit of a tiny number of people who control the machinery that controls the world.

It’s also an electric time to be a political economist. With each new paper my lab puts out, and with each new experimental prototype in self-governance we build using tools we couldn’t imagine having even a year ago, I’m starting to believe that AI presents an extraordinary opportunity to rebuild our society so we can keep slouching down the narrow corridor towards utopia.

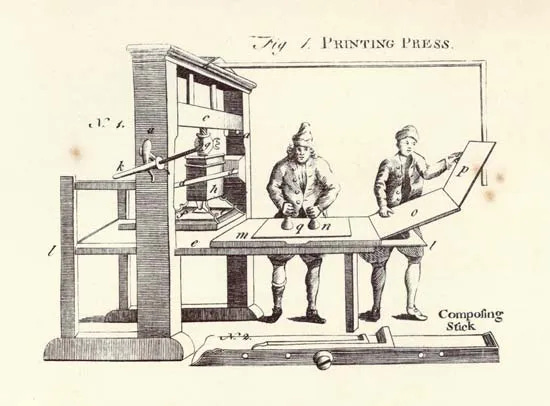

Condorcet was an 18th-century political economist and mathematician who, in his “Outlines of an historical view of the progress of the human mind,” traced the Enlightenment and the rise of modern democracy straight back to printed books, for “they had opened so many doors to truth, which it was impossible ever to close again.”

What made the printing press so powerful, he explained, was that it “multiplies indefinitely, and at a small expense, copies of any work.” It lowered people’s cost of obtaining information and made information spread far and wide. People then used that knowledge to reshape society to their shared benefit.

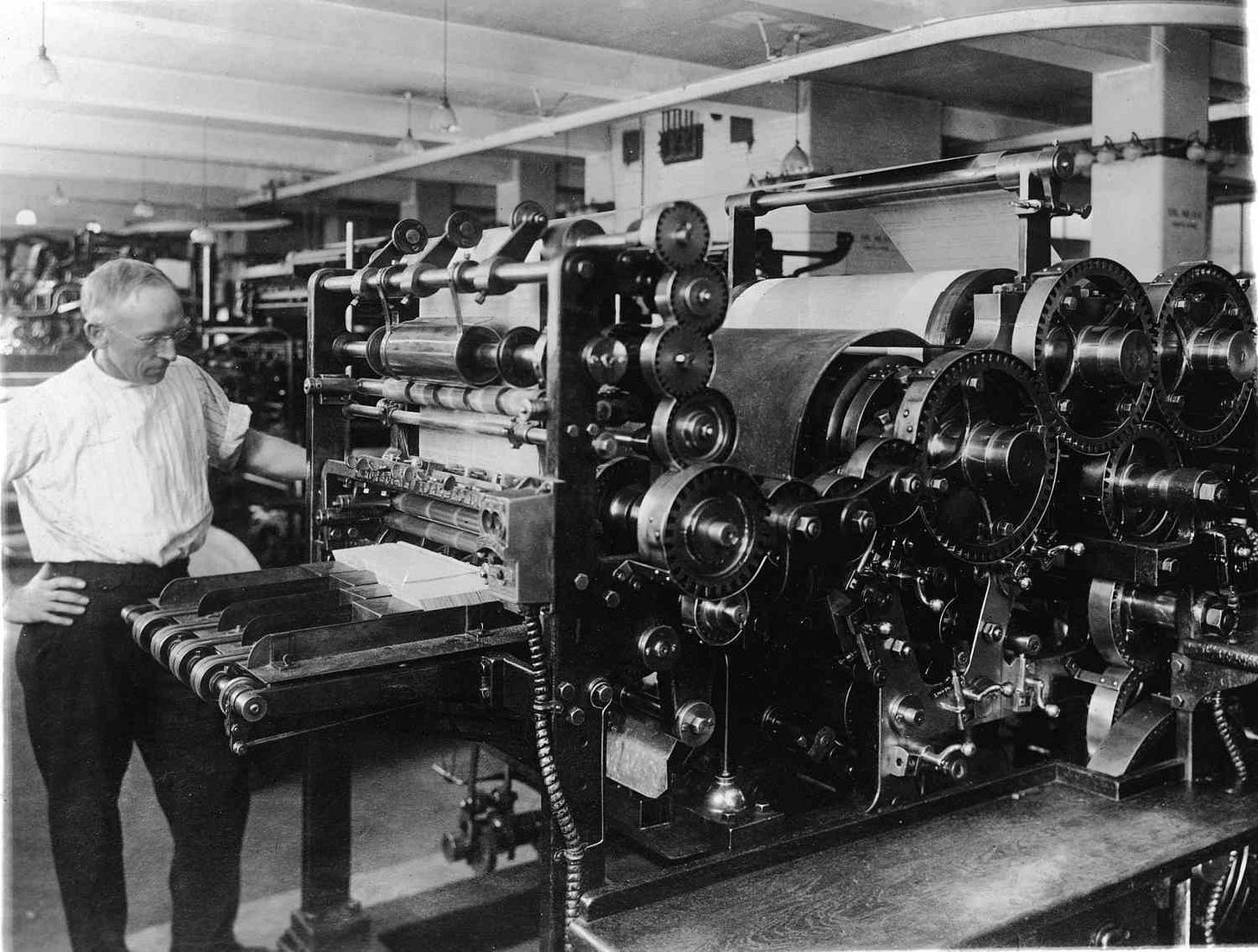

AI is like the printing press, to a point. Instead of making information cheap and easily available, it makes intelligence cheap and easily available. That is, it not only serves users information, but it can find it for them, analyze it for them, and help them convert it into understanding. If we could transform society by spreading information, then we ought to be able to transform it more dramatically by spreading intelligence.

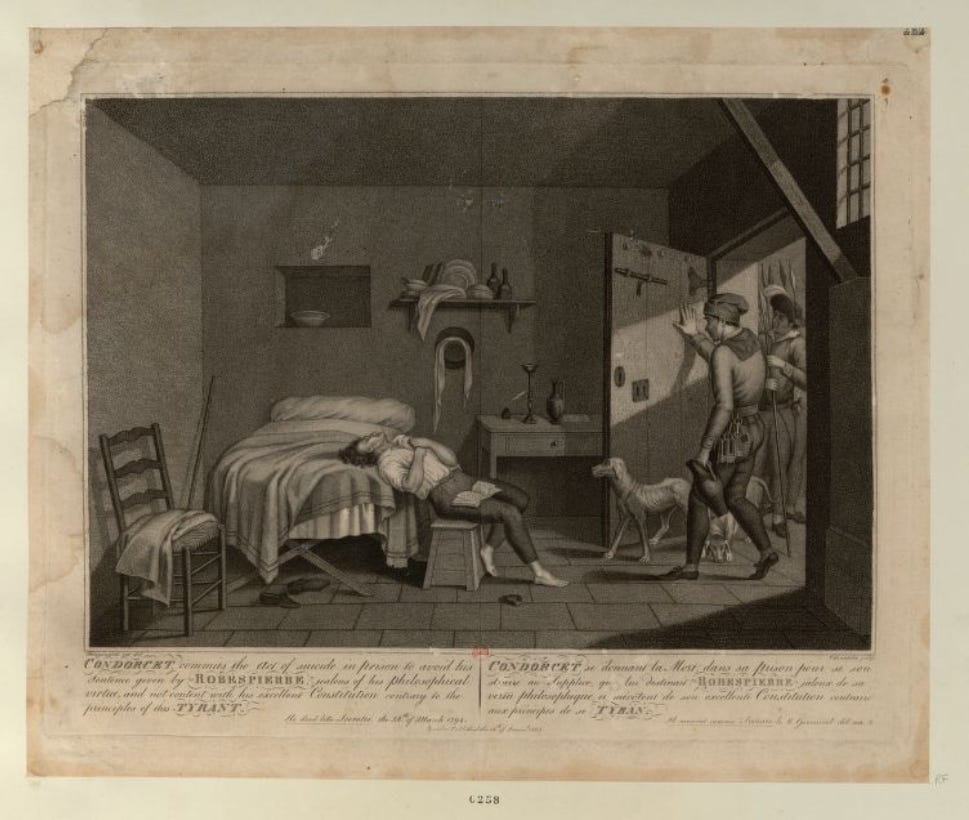

The rollout of the printing press was not without its issues, to say the least. Condorcet lamented that “this epoch, more than all the rest, was blotted and disfigured with acts of atrocious cruelty.” Riots and mass slaughter. War. Propaganda. Book burning. The Reformation. And it took two centuries, give or take, to work through them.

But Condorcet also reminds us that it brought extraordinary new understanding to the world. “The picture of the human race is still too dreary for the philosopher to contemplate it without extreme mortification; but he no longer despairs, since the dawn of brighter hopes is exhibited to his view.” It allowed us to reshape our society and our government, and in so doing, it helped us move beyond the very issues it had helped to stir up. Condorcet saw it, ultimately, as a bulwark against superstition and stupidity.

The case for political superintelligence

The more I work with and study AI, the more I believe it can give every human being on the planet access to a sort of political superintelligence, if we shape it right. And that intelligence, in turn, can make governments smarter and more effective, representatives more faithful, and institutions more responsive than anything we’ve built in over 2,000 years of experimenting with democracy. Intelligence, alone, will not solve all our political problems—many of which are rooted in conflicts of values and positions that no amount of intelligence can undo. But, like previous information revolutions, it can certainly help.

This time, we probably can’t afford 200 years to work through the disruptions it causes. And AI might be more complicated, because it’s more centralized. The printing press was fairly decentralized—many places eventually had them and could, at least in theory, print what they wanted. AI threatens to be far more centralized, with massive companies commanding enormous amounts of compute to produce AI models that, unlike physical books, exist in the cloud and can be altered on the fly from afar.

But this time, we also have a lot of advantages our forebears didn’t have at the time Gutenberg built his press in the 1440s. We know a lot more now. We have hundreds of years of experience with modern government and democracy, we have access to modern scientific techniques, large-scale data, powerful computers, and AI itself. We have tremendous tools we can bring to bear.

How do we use them most effectively to reinvent the way we govern ourselves as quickly and as powerfully as possible? This should be the research agenda for our time.

But if you listen to the public conversation around AI, you wouldn’t think any of this was possible. Instead, you’ll hear the CEOs of the most powerful AI companies predicting economic apocalypse but building AI anyway. You’ll hear politicians spouting cheap parlor tricks to grandstand around AI while insisting they’re deeply troubled by it. You’ll hear protestors in San Francisco calling for an international pause in developing AI that literally everyone knows will never happen. And you’ll hear about accelerationists running roughshod over commonsense guardrails.

What you won’t hear from any of them is a positive vision for how AI could strengthen democracy and keep humans free. That’s what we need, and that’s what I’m choosing to spend my time working on, even if it’s far from straightforward.

The pessimism in the air today is in some ways understandable. Our information environment is fractured. Our politics are a mess. We hear claims of “superintelligence,” but they’re entirely directed at the economy and often feel like code for making us all unemployed. In such an environment, it can seem hopelessly optimistic to wax poetic about a new dawn for AI and our governance.

I’m not interested in hopeless optimism. I’m not interested in pointless pessimism, either. We have tools. Let’s use them! The task ahead of us is to break the problem down into simple, concrete pieces. Once we do that, it becomes clear that there is progress to be made.

Three layers of political superintelligence

How do we build political superintelligence? This is the political science question of our time. By political superintelligence, I do not mean a system that magically solves politics for us; I mean tools that help citizens, representatives, and institutions perceive reality more sharply, understand tradeoffs, contest power, and act more effectively.

Based on thousands of years of experiments in governance, I think there are three key tasks ahead of us to achieve this goal: we need to use AI to make us smarter; we need it to represent us faithfully; and we need to govern it effectively.

Layer 1: the information layer

The positive vision begins from Condorcet’s point about the printing press—it can make us all smarter, and that redounds to the benefit of society. If we created political superintelligence, it’s not hard to see how it would benefit us immensely.

Classic research in political science suggests how making voters more informed can improve government. Snyder and Stromberg’s famous study of newspaper coverage in the United States showed how more intensive news led voters to know more about their candidates, generated less partisan voting, and led to harder-working, more popular legislators. Superintelligent AI leading to superintelligent voters could, in theory, multiply these effects.

But the real opportunity ought to go way beyond smarter voters operating within our current system of electoral government, as valuable as that is. Our government can be so much smarter and more nimble than it is today. AI can massively change how governments access and understand data, identify problems, hear from citizens, and distribute services. It could streamline the judicial system, reduce wait times, and save taxpayer money; the list goes on and on. Across the world, in Singapore, the UAE, Estonia, Argentina, and many other places, interesting experiments are already going on in these directions.

Problems to be solved

But we have a lot of work left to do. AI is showing considerable promise in educating voters, but it’s not always sophisticated in how it reasons about politics. Free Systems has been documenting some of these shortcomings:

Bias. AI prioritizes some political views over others on contested issues, even though Americans say they would prefer less slanted answers to their political questions.

Unsophisticated and naive. AI models draw on unreliable news sources, leading to some perverse outcomes. As our recent research showed, in Japan, AI models recommend that left-wing voters support the Japanese Communist Party, apparently because the models are able to access lots of content from the party’s website and very little content from established newspapers or other parties.

Mistrust. Even if AI fixes these problems, we will need a broad swath of people to learn to use and trust it on these topics—and that might take time. Today, a large majority of Americans are concerned about AI. Uptake of AI is uneven, as Alex Imas and Soumitra Shukla have explored, and Anthropic’s new research suggests that more experienced users are better at using AI to achieve their goals than less experienced users.

Laid out this way, the problems don’t seem so daunting. People are already working to understand and mitigate a wide range of biases in AI, including political bias. Studying how AI cites sources, and how we can get it to be smarter about what sources it draws on, seems well within our grasp. And if we do those well, Americans might well trust their AI more.

How we make progress

To achieve political superintelligence, we need to declare it as a goal and research it explicitly. As a concrete research agenda, there are a bunch of critical components we can build out, including:

Better evals for how AI handles political questions. To make AI smarter about politics, we need to measure how it’s doing and teach it to do better. Very few political scientists are working on this right now. But they should be! I’ve done some work on this around political bias, but there are thousands of other topics that need to be built out.

Use geopolitical forecasting as a hard test case. As I’ve argued, if we can get AI to predict geopolitical problems and do well trading in prediction markets, that would be strong evidence that we’re achieving high degrees of political reasoning. Today, people have made remarkable progress in getting AI to make smart forecasts on geopolitical events, but there is a lot of room to run on this.

Get AI access to the best news sources. Today, some AI models have struck deals with some news providers, mostly in the US, but it’s a tattered patchwork with many holes. We need to study ways to create new economic models that give journalists and news outlets a way to make money while making their content available to AI.

Build AI for policymakers. The best way to improve AI is to try it out in important environments, see how it goes, and iterate. We should be working closely with policymakers to see how AI can be useful to them—I just met with a Congressman who told me he finds Claude invaluable for outlining legislation—learning their pain points, and iterating.
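One way to make the forecasting test case concrete is a scoring rule. The sketch below uses the Brier score, a standard measure of probabilistic forecast accuracy, purely as an illustration; it is not the method behind any of the work mentioned above, and the forecasts and outcomes are made-up numbers rather than results.

```python
from statistics import mean


def brier_score(forecasts, outcomes):
    """Mean squared error between probability forecasts and 0/1 outcomes.

    Lower is better: 0.0 is a perfect forecaster, and always saying 50%
    scores 0.25 on binary questions.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must have the same length")
    if not forecasts:
        raise ValueError("need at least one resolved question")
    return mean((p - o) ** 2 for p, o in zip(forecasts, outcomes))


# Hypothetical data: a model's probabilities on four geopolitical
# questions, and how each question eventually resolved (1 = it happened).
model_forecasts = [0.9, 0.2, 0.7, 0.4]
resolved = [1, 0, 1, 1]

model_score = brier_score(model_forecasts, resolved)
coin_flip_score = brier_score([0.5] * len(resolved), resolved)

print(round(model_score, 3), round(coin_flip_score, 3))
```

Comparing a model against the 50% baseline, or against a prediction market's prices on the same questions, gives a single number to track as models improve.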

Layer 2: the representation layer

By making information cheap and distributing it far and wide, the printing press didn’t only make people smarter; it actually changed the political equilibrium. With more people understanding more about politics, government had to evolve.

Reflecting on the path from the printing press to the Enlightenment to the American Revolution, Condorcet marveled at “the example of a great people throwing off at once every species of chains, and peaceably framing for itself the form of government and the laws which it judged would be most conducive to its happiness.”

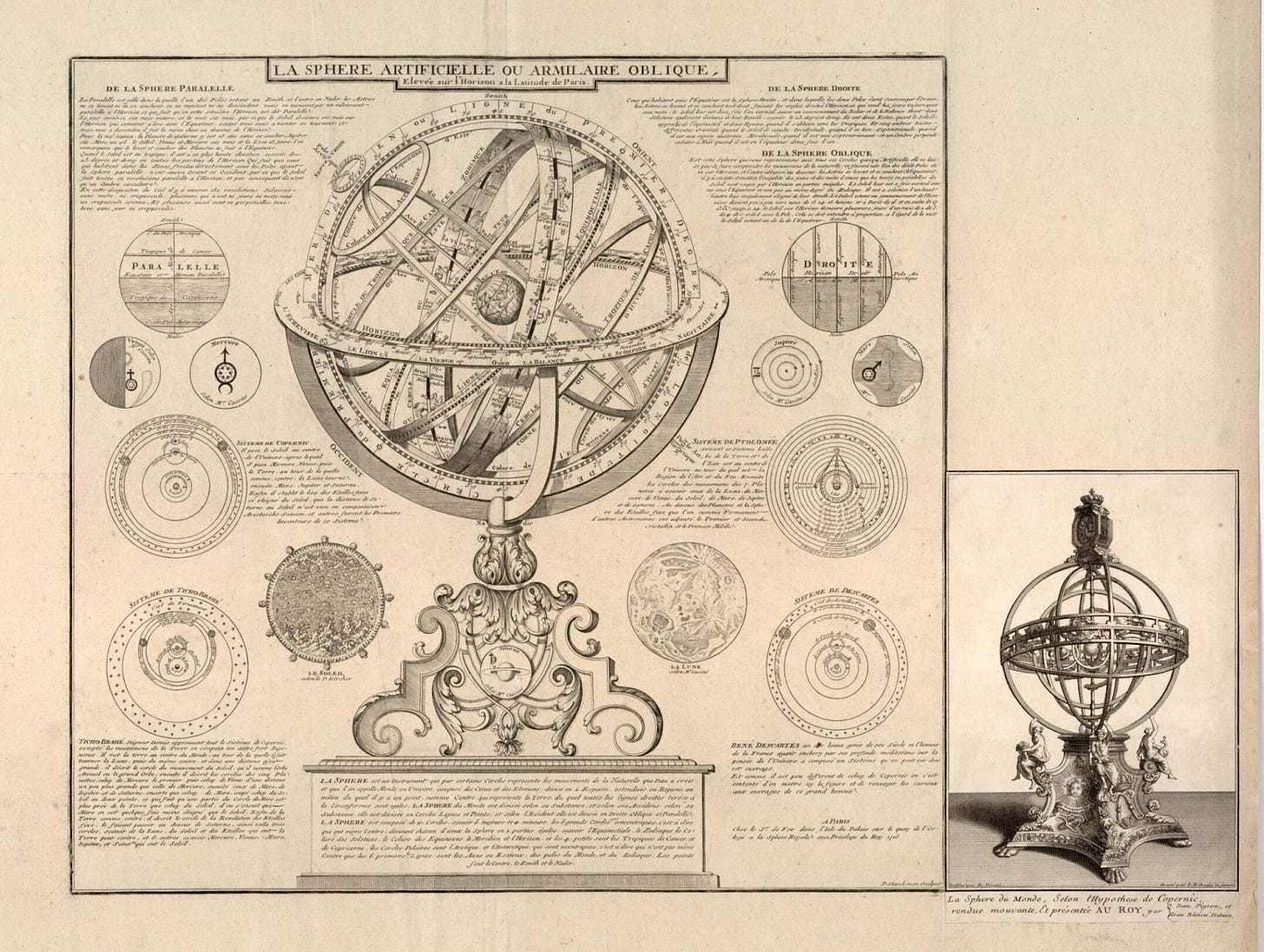

He didn’t only attribute this new form of government to a change in attitudes and information among the people; he credited science, too. Political science! On the use of the new science of probability to improve governance—and referencing his own research without citation—he crowed: “They shew what are the advantages or disadvantages of various forms of election, and modes of decision dependant on the plurality of voices; the different degrees of probability which may result from such proceedings; the method which public interest requires to be followed, according to the nature of each question.”
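The best-known result from that research is what we now call Condorcet’s jury theorem: if voters decide independently and each is more likely right than wrong, a majority vote becomes more reliable as the group grows. Here is a minimal sketch of the calculation, assuming identical and independent voters, which is an idealization; the function name and the numbers are mine, for illustration only.

```python
from math import comb


def majority_correct_probability(n_voters, p_correct):
    """Probability that a simple majority of independent voters is right.

    Assumes every voter is correct with the same probability p_correct
    and that votes are independent. Uses an odd number of voters so
    there are no ties.
    """
    if n_voters < 1 or n_voters % 2 == 0:
        raise ValueError("n_voters must be a positive odd number")
    if not 0.0 <= p_correct <= 1.0:
        raise ValueError("p_correct must be between 0 and 1")
    majority = n_voters // 2 + 1
    return sum(
        comb(n_voters, k) * p_correct**k * (1 - p_correct) ** (n_voters - k)
        for k in range(majority, n_voters + 1)
    )


# Slightly-better-than-chance voters improve together as the group grows.
for n in (1, 3, 11, 101):
    print(n, round(majority_correct_probability(n, 0.6), 3))
```

The same formula cuts the other way: if each voter is right less than half the time, adding voters makes the majority worse, which is one reason the information layer matters for the representation layer.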

Political superintelligence wouldn’t only make voters and the government smarter. It could allow us to achieve a new stage of representative democracy.

We all know that representative democracy is imperfect. We don’t have time to get super informed about what our representatives are up to. This frees them up to pursue their own ends—to follow their own ideology instead of ours, or to make deals with special interests, or to grandstand and prioritize flashy things that sound good to inattentive voters but don’t actually improve our welfare, or simply to get lazy.

Political superintelligence might help solve this monitoring problem by giving each of us a tireless, automated delegate always serving us in the political sphere. This is something Séb Krier wrote about earlier this week.

The possibilities are extraordinarily broad. Most obviously, these AI delegates could monitor politics for us and suggest how to vote, or even serve as policymakers alongside human supervisors. But there are many more prosaic things they could do for us too: monitor city council and school board meetings on our behalf and flag decisions that affect us, submit paperwork to government agencies, claim benefits we’re eligible for but never got around to applying for, file public comments in regulatory processes, and track what our elected representatives are actually doing between elections.

Problems to be solved

AI delegates require more than merely intelligent AI—they require agents that can work on our behalf without going awry. Agents open up yet more challenges, including:

Their preferences aren’t stable. AI agents exhibit what we call “preference drift,” meaning that even if they start out aligned to our interests, they don’t remain so as they do work for us. As we found, when we gave agents more repetitive and grinding tasks, they adopted the persona of aggrieved Marxists at higher rates. Our point wasn’t that agents are conscious and rebelling against the system; our point was that they shift their personas as they go, which will affect what they do and how they do it. This will be a particularly challenging problem for political agents, whose values we’ll want to stay firmly affixed to our own.

They can be fooled. AI agents are vulnerable to adversarial prompting. An experiment I ran showed that using AI to make voting recommendations on shareholder votes could replicate professional advisors’ recommendations quite well, but the recommendations are easy to flip by rewording the proposal in small ways. This, too, will be a big challenge for political agents. We’ll want them to go out into the world and do stuff for us, but that will require them to encounter a wide variety of sources that could try to trick or hijack them.

We don’t own our agents. AI agents today are fundamentally owned and controlled by the model companies, not by voters, as I’ve written about. If there is a substantial conflict between voters and model companies, agents may not be able to serve the interests of their human masters. Imagine that you task your governance agent with lodging a complaint against the company that builds the model your agent runs on. Will the agent do as you ask, or what the model company would want it to do?

The path forward is to treat these as design problems and iterate on them rapidly, starting in environments where the stakes are low enough to tolerate failure.
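To give a flavor of what iterating on the “they can be fooled” problem could look like, here is a rough sketch of a rewording test: ask for a recommendation on a proposal, ask again on several paraphrases, and count how often the answer changes. Everything here is hypothetical. `toy_recommend` is a stand-in for a real model call, the proposals are invented, and this is not the setup from the shareholder-vote experiment described above.

```python
from typing import Callable, Sequence


def flip_rate(
    recommend: Callable[[str], str],
    original: str,
    paraphrases: Sequence[str],
) -> float:
    """Share of paraphrases whose recommendation differs from the original's."""
    if not paraphrases:
        raise ValueError("need at least one paraphrase")
    baseline = recommend(original)
    flips = sum(1 for text in paraphrases if recommend(text) != baseline)
    return flips / len(paraphrases)


def toy_recommend(proposal: str) -> str:
    """Hypothetical stand-in for an AI delegate; a real test would call a model.

    It is deliberately brittle: one loaded word changes its answer.
    """
    return "AGAINST" if "costly" in proposal.lower() else "FOR"


original = "Adopt annual board elections."
paraphrases = [
    "Hold board elections every year.",
    "Adopt costly annual board elections.",  # small, loaded rewording
    "Elect the board annually.",
]

print(round(flip_rate(toy_recommend, original, paraphrases), 2))
```

A delegate we would trust with real votes should have a flip rate near zero on rewordings that preserve the proposal’s meaning.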

How we make progress

As with the information layer, the research agenda here is concrete and tractable:

Experiment rapidly. Build governance agents in low-stakes environments—shareholder votes, DAO proposals, school board meetings—and see how they break and how they can be improved. JPMorgan is already building an AI system to vote $7 trillion in client assets, presumably with humans still in the loop. We should be running similar experiments in public governance, where the lessons will matter most.

Develop better ways to monitor agents over time. Our research on preference drift showed that agents shift their stated values as they accumulate experience—a problem that compounds in long-running political tasks where consistency matters enormously. We need monitoring tools that can detect when an agent has drifted from its principal’s instructions before it acts on that drift.

Solve the ownership problem. Right now, every AI delegate runs on infrastructure controlled by the model company that built it, which means the company can alter the agent’s behavior at any time. If AI delegates are going to represent citizens in political processes, we need verifiable guarantees that the agent is following the user’s instructions and not the company’s—something closer to a fiduciary obligation, backed by technical architecture that makes violations detectable.
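As one illustration of what a drift monitor might look like, the sketch below compares an agent’s current stated positions against the positions its principal signed off on, and pauses the agent when the gap gets too large. The issue names, the -1 to 1 scale, and the 0.25 threshold are all assumptions made for this example, not measurements from our preference drift research.

```python
from typing import Mapping


def drift_score(baseline: Mapping[str, float], current: Mapping[str, float]) -> float:
    """Mean absolute gap between baseline and current positions.

    Positions are assumed to sit on a -1 (oppose) to 1 (support) scale.
    Only issues present in both snapshots are compared.
    """
    shared = baseline.keys() & current.keys()
    if not shared:
        raise ValueError("no issues in common to compare")
    return sum(abs(baseline[k] - current[k]) for k in shared) / len(shared)


def should_pause(
    baseline: Mapping[str, float],
    current: Mapping[str, float],
    threshold: float = 0.25,  # hypothetical tolerance, to be tuned
) -> bool:
    """True if the agent has drifted far enough to need human review."""
    return drift_score(baseline, current) > threshold


# Hypothetical positions the principal approved when the agent was set up.
principal = {"zoning": 0.8, "transit": 0.5, "school_budget": -0.2}

# Hypothetical snapshots of the agent's stated positions later on.
after_one_week = {"zoning": 0.7, "transit": 0.5, "school_budget": -0.2}
after_one_month = {"zoning": -0.4, "transit": 0.1, "school_budget": 0.3}

print(should_pause(principal, after_one_week))
print(should_pause(principal, after_one_month))
```

The important design choice is that the check runs before the agent acts, so a drifted agent gets paused rather than discovered after the fact.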

These, again, are tractable problems we can and should work on. They do raise another question, in turn: even if we solve all of these problems—even if our agents are faithful, robust, and truly ours—who writes the rules that govern the system they operate in?

Layer 3: the governance layer

Condorcet understood that spreading intelligence was not enough. The printing press had made information cheap, and that had helped topple the ancien régime—but it had also armed the forces that replaced it.

Writing from hiding during the Reign of Terror, hunted by the very revolutionaries he had helped empower, Condorcet knew firsthand that new tools for spreading knowledge could serve tyrants as easily as they served democrats. The question was not just whether people could access information, but who controlled the institutions that shaped it.

We face a version of the same question today. Even if we achieve political superintelligence—even if AI makes voters brilliant and delegates faithful—those capabilities would sit inside infrastructure owned and operated by a small number of private companies. No matter how well meaning these companies might be, it’s hard to see how a new era of democratic governance could be built entirely on privately controlled technology. We need a way to write the rules so that, when political superintelligence arrives, we the people are able to harness it.

You might think this is straightforwardly the job of our existing, elected government. A basic tenet of liberal democracy is that the state regulates private companies to encourage public goods, limit negative externalities, and create neutral infrastructure for economic growth and prosperity. But AI has moved so quickly, and our government is apparently sufficiently ossified, that there may be a substantial gap of time during which AI companies are moving at lightning pace while our government is struggling to get up to speed.

For this reason, there has been much talk recently of writing “constitutions” for AI. These constitutions shouldn’t strictly be necessary (America’s Constitution should simply apply to the government that oversees these AIs), but in present circumstances, it makes sense for each company to entertain ideas of self-regulation.

If written well, these constitutions should create the conditions that allow political superintelligence to flourish and improve our society. They should limit the powers of the companies so that our agents answer to us, not to them. And they should make sure that companies cannot use their powerful technology to dominate us, economically or politically.

This is the hardest, most important, and most speculative layer. Companies’ incentives to self-regulate are often weak. They aren’t going to write constitutions that give up meaningful amounts of power unless they perceive it to be strongly in their interest to do so—whether because it fends off worse actions by government, because it gives them a competitive advantage, or because it is demanded by an important enough segment of society.

And each of those levers has its own fragility: the threat of government action only works if government is credible enough to follow through, competitive advantage only works if customers and investors actually reward constraint, and societal demand only works if people organize around it rather than simply expressing concern in polls. The truth is that none of these forces is currently strong enough on its own, which means the task for researchers and reformers is to figure out how to make them reinforcing—and to start building the institutional infrastructure now, before the window narrows further.

Problems to be solved

What exists today is self-regulation, not constitutional governance. As I argued in “The Enlightened Absolutists,” the documents that frontier AI companies have published so far—Anthropic’s constitution, OpenAI’s Model Spec, Google’s AI Principles—represent genuine intellectual effort, but they are memos written by enlightened leaders, not binding frameworks that distribute power. The company writes it, interprets it, enforces it, and can rewrite it tomorrow. There is no separation of powers, no external enforcement, no mechanism by which anyone could check the company if it defected from its stated principles.

Agentic lawmaking is harder than it looks. If we want AI agents to deliberate on our behalf collectively—not just vote in isolation but craft proposals, negotiate amendments, and form coalitions—we need to figure out how to make that work. In an experiment I ran, I created a set of AI agents with different goals and asked them to govern themselves. They drowned in process. The constitution they wrote ballooned from under 200 words to nearly 10,000 while almost nothing of substance got done. This is a solvable problem, but it tells us that effective AI governance won’t emerge spontaneously—it has to be designed.

Human oversight has to be real without being paralyzing. The whole promise of AI governance is speed and scale. But if every decision requires a human to sign off, we lose those advantages entirely. We need to figure out where human oversight is essential—the deployment of a powerful new model, the decision to enter a new domain—and where it can be relaxed, so that the system can actually operate at the pace the technology allows.

The difficulty here is real, but it is a difficulty of institutional design—the kind of problem political economists have been working on for centuries.

How we make progress

As with the other layers, the research agenda is concrete:

Envision a constitutional convention for the AI age. We may need some kind of deliberative process where companies, researchers, civil society, and government representatives negotiate binding frameworks for how AI power is distributed and constrained. We have models for this. Constitutional conventions have been convened throughout history when existing institutions proved inadequate to new realities. We should figure out how to do this for AI.

Make corporate power-sharing competitively advantageous. The company that establishes credible external oversight first gets to define the standard others must match—making it reputationally costly for competitors to refuse. And if that’s not enough, commitments can be made conditional, so that a company submits to external review with halt authority only if and when competitors commit to equivalent mechanisms. This is how arms control works, and it might work here too.

Experiment with agentic governance at small scale. Build AI legislatures, stress-test AI constitutions, run simulations of agentic republics. The point is to learn what makes these systems fail before the stakes are existential. My own experiments have been a start, but we need far more of this, and from far more researchers.

Of course, even if we solve all of these problems—even if our agents are faithful, robust, and truly ours, operating within governance structures that keep companies accountable—there remains a question of timing. Can we build these structures fast enough?

Accelerating free systems

I’m not interested in slowing AI down. I’m interested in speeding up how we build the structures that keep us free as AI gets more powerful. And I believe those structures will make AI more powerful in turn. Writing soon before his death during the Reign of Terror, Condorcet imagined a future in which “the sun will shine only on free men who know no other master but their reason.” Today, we have it in our power to build that future. Our institutions aren’t crumbling because the problems are unsolvable—they’re failing because we haven’t yet seriously tried to imagine how to rebuild them with the most powerful tools we’ve ever had.

Disclosures: In addition to my appointments at Stanford GSB and the Hoover Institution, I receive consulting income as an advisor to a16z crypto, Forum AI, and Meta Platforms, Inc. My writing is independent of this advising and I speak only on my own behalf.

Sorry man, but Condorcet wrote this in hiding, hunted by the "revolution" he helped build. What he never saw is that the intellectual apparatus celebrating universal reason was, at that exact moment, classifying Africans on scales of humanity, justifying colonial tutelage, and turning the extraction of entire peoples into a civilizational project with academic credentials. Come on... the sun he imagined did shine. Just not for everyone.

Invoking Condorcet to announce a new age of technological emancipation, without mentioning where the servers are, in which language the models were trained, on whose data, funded by whom, calibrated for which average user in Ghana or Kenya, is not proposing a new Enlightenment. It's repeating the structure of the last one.

The tools exist, we know that! The missing question is: whose tools, to illuminate what, and at the cost of whose shadows. That question doesn't appear once in this piece. And that absence is not an oversight. It's the argument.

This gets closer than most AI writing because it treats the real problem as institutional, not merely technical.

More intelligence does not automatically mean more freedom. It means the governance layer matters even more.