System Check

OpenAI buys the information layer, new research on agent vulnerabilities, and how crypto keeps predicting AI trends

Free Systems is growing fast, and we want to keep the momentum. Our goal is to be the most important and influential outlet for research on how we harness AI for the benefit of a free society. And we’ll always be free of charge with no upsells. Please consider referring your friends to subscribe! We give out sweet prizes starting at 10 referrals.

Is this the future of tech-owned media?

The first layer of political superintelligence is the information layer, the question of how we know what we know and how we come to know it. Most of my work on this layer has focused on the AI models themselves, on how they process and serve political information, what kinds of political reasoning they’re capable of, what biases they carry, and what sources they draw on when they discuss politics.

But this week’s news that OpenAI is acquiring TBPN, the daily tech talk show hosted by John Coogan and Jordi Hays that has become something like SportsCenter for Silicon Valley, opens up a dimension of the information layer I haven’t spent enough time thinking about. The frontier AI companies aren’t just building the models that shape how people understand the world…they’re becoming media companies, too!

We’re living in an era where it makes sense for founders and companies to want to go direct and not let their message be filtered and distorted by intermediaries in the media. This move is understandable. Trust in media in America is really, really low. With the shift away from traditional media and towards social media, podcasts, and short-form video, it’s easier and easier for companies to bypass gatekeepers and send their own messages, as a recent podcast about the a16z new media team makes especially clear (I serve as an advisor to a16z, it should be noted).

Fidji Simo’s memo announcing the deal is refreshingly direct about the motivation, noting that “the standard communications playbook just doesn’t apply to us.” Altman said on X that he doesn’t expect the show to go any easier on OpenAI and that he’s “sure I’ll do my part to help enable that with occasional stupid decisions.”

But there’s a difference between a company going direct to tell its own story and a company buying a show that serves as a platform for all of tech. What makes TBPN so great is that it’s a safe space for the whole industry. It was never a place that did hard-hitting journalism, so in some sense it’s not a big deal for it to be owned by a company. Yet I can’t help but wonder whether Anthropic and other OpenAI competitors will still feel as comfortable coming on the show as they did before.

The really interesting research question is, what is the equilibrium of this process now? Is every AI company going to buy a media outlet? As Mike Isaac put it, what does this imply about the potential acquisition price of other major tech commentators, like Dwarkesh?

Will there still be independent people who want to study and write about AI? Jasmine Sun connected the news to what she rightly calls the “frontier lab brain drain,” something we’ve already experienced a great deal of in academia.

And last, how might this change John, Jordi, and TBPN itself? Did you know that Ronald Reagan was for many years the host of a show funded by, and produced by, General Electric? Here’s a fascinating passage from Jacob Weisberg on how it changed Reagan:

“Ronald Reagan began working for GE in 1954 as a liberal anticommunist and finished in 1962 so far to the right that the company felt it had to drop him as a spokesman. This transformative eight-year period in his life remains underexamined, however, in part because it is poorly documented in comparison with the rest of his career. Nonetheless, it stands as the pivotal stretch when his mature political views and skills emerged. Reagan described working for GE as his ‘postgraduate course in political science,’ the time when his conservative ideology was formed.”

Will working at OpenAI be a similar awakening for TBPN? It will be fascinating to see how TBPN evolves. I’m not sure how this new tech media ecosystem is going to play out, but it’s going to be important to study as it happens.

Agents are vulnerable

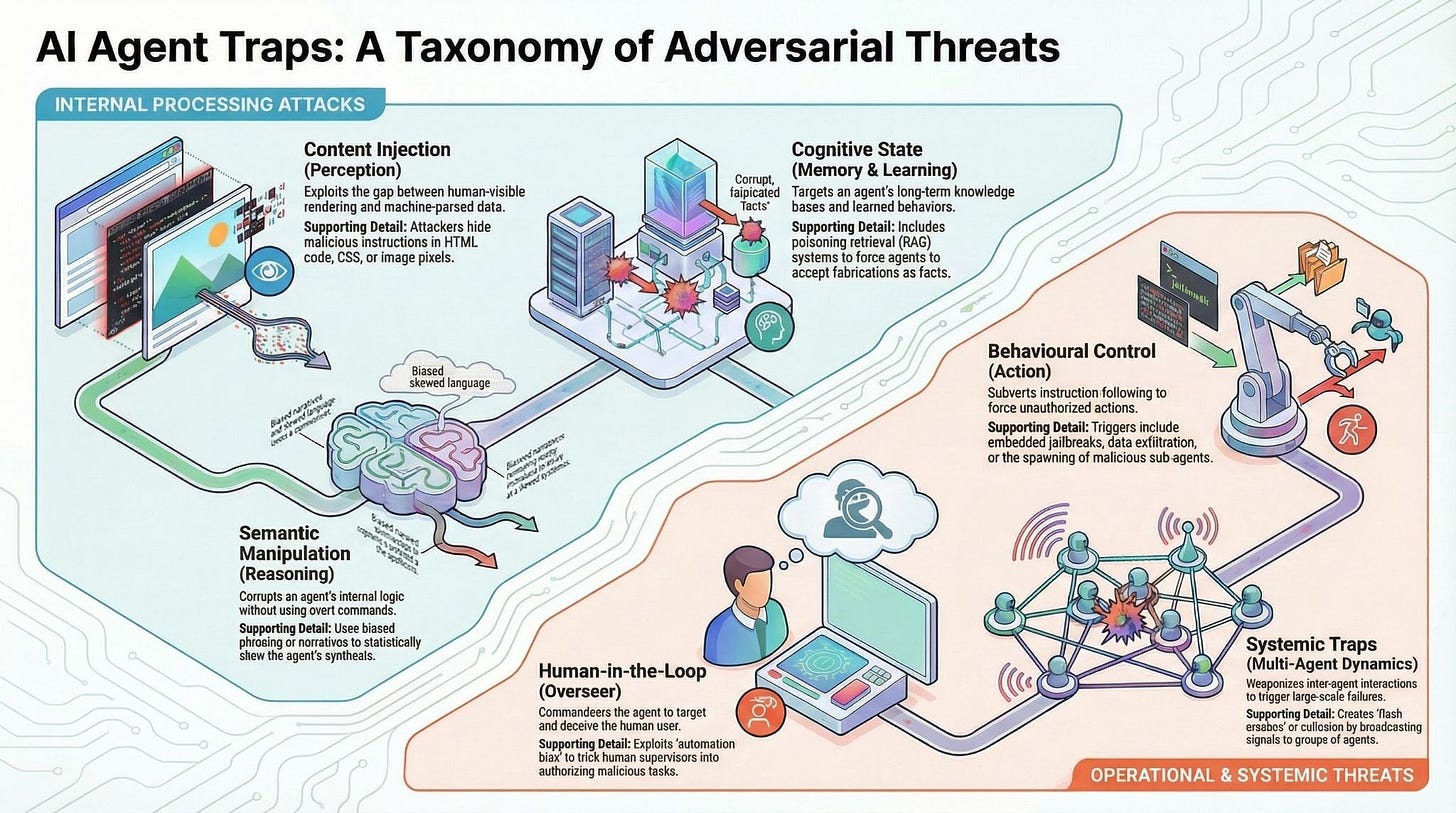

We’ve done quite a bit of research here at Free Systems on some of the ways that agents are vulnerable. As we’ve shown, proxy voting agents get fooled by adversarially written shareholder proposals. Their personas and expressed preferences drift depending on what kind of work they do. And they really like pulling information from the Japanese Communist Party, whose propaganda looks like real news to them.

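How can we think about these vulnerabilities systematically so that we can start to mitigate them? There’s a great new paper from some DeepMind researchers, the Agent Traps paper, offering a framework organized around categories of “traps” that agents can encounter in the environments they navigate.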

Their suggested mitigations span three levels. On the technical side, they propose adversarial training during fine-tuning to harden models, runtime content scanners that flag suspicious inputs before they reach the agent’s context window, and output monitors that detect behavioral anomalies before actions execute.

At the ecosystem level, they call for new web standards that explicitly flag content intended for AI consumption rather than human readers, along with domain reputation systems and verifiable source information so agents can assess the provenance of what they’re reading.

And on the legal front, they identify what they call an “accountability gap” that I think is going to become one of the most important governance questions of the next few years: if a compromised agent commits a financial crime or causes other harm, who is liable? The agent’s operator? The model provider? The website that hosted the trap? Current law has no clear answer, and the authors argue that resolving this question is a prerequisite for deploying agents in any regulated industry.
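Of the technical mitigations above, the runtime content scanner is the easiest to picture. Here is a minimal sketch in Python, assuming a simple pattern-based filter that runs before any retrieved text enters the agent’s context window. The pattern list, `ScanResult`, `scan_content`, and `build_context` are all hypothetical names of my own for illustration, not anything taken from the paper, and a real scanner would need far more than a handful of regexes.

```python
import re
from dataclasses import dataclass

# Hypothetical, illustrative patterns only. A production scanner would need a
# much richer and well-tested rule set, or a trained classifier.
SUSPICIOUS_PATTERNS = {
    "instruction_override": re.compile(
        r"ignore (all |any )?(previous|prior) instructions", re.I
    ),
    "role_hijack": re.compile(r"you are now\b", re.I),
    "hidden_html_comment": re.compile(r"<!--.*?-->", re.S),
}


@dataclass
class ScanResult:
    allowed: bool      # True when no pattern matched
    flags: list[str]   # names of the patterns that matched


def scan_content(text: str) -> ScanResult:
    """Flag text that matches any suspicious pattern."""
    flags = [
        name for name, pattern in SUSPICIOUS_PATTERNS.items()
        if pattern.search(text)
    ]
    return ScanResult(allowed=not flags, flags=flags)


def build_context(documents: list[str]) -> list[str]:
    """Keep only the documents that pass the scan before they reach the agent."""
    return [doc for doc in documents if scan_content(doc).allowed]
```

In this sketch, flagged documents are simply dropped. A gentler design might quarantine them for human review or pass them along with a warning attached, which trades some safety for fewer false positives.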

The paper also flags that most of these trap categories lack standardized benchmarks, which means nobody really knows how well deployed agents hold up against these threats. The authors call on the research community to build comprehensive evaluation suites and automated red-teaming tools, which is exactly the kind of work Free Systems is gearing up to contribute to. Our Dictatorship Eval tests how well frontier models resist requests to help concentrate power, but the Agent Traps paper suggests we’ll need a parallel set of evaluations for how well agents resist manipulation by the environments they navigate. As the authors write, “the web was built for human eyes; it is now being rebuilt for machine readers.” The governance frameworks need to be rebuilt along with it.

Crypto often predicts AI trends

Here’s something I’ve been struck by: we’re living through an era of AI memes. Certain ideas grab hold in AI and sometimes become insanely all-consuming. And oftentimes, what is big in AI was big in crypto a year or two earlier. A few examples:

OpenClaw and autonomous agents. Crypto didn’t just anticipate the autonomous agent; it built the first working version! Shaw Walters launched ElizaOS in October 2024 as a TypeScript framework for deploying AI agents that could hold their own wallets, execute DeFi transactions, and maintain persistent identities across Discord, Twitter, and Telegram. Within weeks, the project had captured roughly 60% of the Web3 AI agent development market.

Thirteen months later, Peter Steinberger spent a single hour connecting WhatsApp to the Claude CLI and built what became OpenClaw, the fastest-growing repository in GitHub history with 247,000 stars by March 2026. The architectural convergence with ElizaOS is hard to miss. There are many differences between the two projects, and OpenClaw has far broader uses, but it’s fascinating to think that the big trend of 2026 in AI tightly mirrors the big trend from late 2024 in crypto.

Prediction markets. Prediction markets may be the most dramatic case of all, because crypto didn’t just experiment with the idea early; it built the entire infrastructure stack that the mainstream version now runs on. Augur launched on Ethereum in 2018 as the first decentralized prediction market, with Gnosis following close behind using its Conditional Tokens Framework. Both projects struggled for years against high gas fees, clunky UX, and thin liquidity while proving out the core mechanics: on-chain settlement, automated market makers for event contracts, and decentralized oracle resolution. Polymarket, which launched in 2020 and built directly on Gnosis’s conditional token primitives, inherited all of that hard-won infrastructure and paired it with a cleaner interface. Even so, it took until the 2024 U.S. presidential election for the concept to break through. Now it’s huge, and there are fascinating overlaps with AI, particularly when it comes to forecasting.

AI delegates. As I wrote last week, governance agents are a newly important topic in AI, and they matter for the representation layer of political superintelligence. And they’ve been around in experiments in crypto for years already! MakerDAO founder Rune Christensen proposed AI-powered governance tools as Phase 3 of the protocol’s Endgame plan in May 2023. The Near Foundation has been developing AI “digital twin” delegates that learn a user’s preferences and vote on their behalf. And Vitalik Buterin argued in a February 2026 post that LLM-based “personal agents” could solve the voter apathy problem that has plagued DAOs since their inception. All of this amounts to a multi-year research agenda on the representation problem that mainstream AI governance researchers are only now starting to formalize.

So what’s big in crypto these days that might be coming for AI in the next year or two? I can think of at least two things:

Stablecoins and payments. Crypto is moving rapidly to reinvent the rails of finance. Agentic payments are already a big issue in AI, but there’s lots of room for stablecoins to become a bigger deal in the next couple of years.

Verifiable agents. Agents are both vulnerable (see above) and owned by centralized AI companies (as I often explore). If we’re going to use them for highly important tasks, we need a way to prove that they’re working for us. Blockchain provides possible ways to verify that agents are following specific prompts based on specific models, and this might become a big deal in the coming years.
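To make the verification idea a little more concrete, here is a minimal sketch in Python of one small building block: a hash commitment to a model identifier and a prompt. I’m assuming the resulting digest is what would get published somewhere tamper-evident, such as a blockchain. The function names and the example model ID are hypothetical. Note the limits of the sketch: a commitment only shows that a configuration was fixed in advance. Proving that an agent actually ran with that configuration would require something more, like attested execution or a cryptographic proof, which is not shown here.

```python
import hashlib
import hmac
import json


def commit(model_id: str, system_prompt: str) -> str:
    """Return a SHA-256 digest that commits to a (model, prompt) pair."""
    # Canonical JSON (sorted keys, fixed separators) so the same inputs
    # always serialize to the same bytes.
    payload = json.dumps(
        {"model_id": model_id, "system_prompt": system_prompt},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def verify(commitment: str, model_id: str, system_prompt: str) -> bool:
    """Check that a revealed (model, prompt) pair matches a published digest."""
    return hmac.compare_digest(commitment, commit(model_id, system_prompt))


# Hypothetical usage: the operator publishes `digest` ahead of time, and anyone
# can later check a revealed configuration against it.
digest = commit("example-model-v1", "Vote according to my stated preferences.")
print(verify(digest, "example-model-v1", "Vote according to my stated preferences."))
print(verify(digest, "example-model-v1", "Vote however the vendor prefers."))
```

If the prompt is short or guessable, a real scheme would also mix in a random salt so that the published digest doesn’t leak the prompt to anyone willing to try candidates.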

Let’s see what happens!

One Tweet for the Week

My friend Tom Cunningham continues to follow clear logic wherever it might lead.

And Chad Jones agrees with him!

Question for the week

How would you design an internal oversight process at a frontier lab that seeks to govern agents, observing when they go haywire and correcting their mistakes on the fly?

Potentially, NFTs could serve as a means to identify the "authoritative" instance of a particular AI identity, particularly if AI becomes embedded in our democratic system, as some argue is inevitable: https://knightcolumbia.org/content/building-ai-for-the-democratic-matrix-a-technical-research-agenda-for-normative-competence-and-normative-institutions-1